How to Calculate ROI for AI Content Workflows

AI boosted productivity 14% on average and 34% for novices in an NBER study of 5,179 customer support agents. Calculate content ROI with research-backed inputs and practical benchmarks.

TL;DR: In an NBER study of 5,179 customer support agents, AI assistance raised productivity 14% on average and 34% for novices. Calculate your content ROI by measuring quality-adjusted output, not just speed gains.

At a Glance

A 2024 NBER study found that access to a generative AI tool increased customer support agents' productivity by 14% on average, with a 34% improvement for novice workers (NBER Working Paper 31161). Yet Columbia SIPA concluded that a reliable ROI framework for generative AI "is not possible at the moment" due to data scarcity (Boehmer, 2024). The gap between measurable productivity gains and reliable ROI calculation defines where AI content workflows stand today, and it determines which inputs you need to measure them honestly.

About the Author

Daniel Agrici is Co-Founder at Rankenstein, where he oversees product development and AI-assisted content strategy. With over eight years in technical SEO and content automation, Daniel has led content operations for B2B SaaS companies across fintech, healthtech, and enterprise software verticals. He writes about the intersection of AI tools and editorial quality.

How Much Does AI Actually Improve Content Productivity?

An NBER study by Brynjolfsson, Li, and Raymond of 5,179 customer support agents found that access to a generative AI assistant increased productivity, measured as issues resolved per hour, by 14% on average. Novice and low-skilled workers saw a 34% improvement, while experienced and highly skilled workers saw minimal impact (NBER Working Paper 31161, 2024).

A separate NBER study by Bick, Blandin, and Deming found that nearly 40% of the US population aged 18-64 uses generative AI. Among employed respondents, 23% used it for work at least once per week, and 9% used it every workday. Respondents reported time savings equivalent to 1.4% of total work hours (NBER Working Paper 32966, 2024).

McKinsey's analysis of 63 use cases estimated that generative AI could add $2.6 trillion to $4.4 trillion in annual value across industries. For marketing specifically, it estimated productivity gains worth 5-15% of total marketing spend (McKinsey, 2023).

These numbers matter because they set realistic expectations. A 14% average productivity gain is significant. Productivity value worth 5-15% of marketing spend is meaningful. Neither is the "300-500% increase" that AI vendors often claim without citation.

Why Is Calculating AI Content ROI So Difficult?

Columbia SIPA's research by Boehmer found that building a framework for generative AI ROI "is not possible at the moment" because not enough firms have implemented the technology, the technology is too nascent, and the associated data is too scarce. The paper concluded that "any actor attempting to create an ROI for Generative AI at this time will face the same challenges" (Columbia SIPA, 2024).

The difficulty breaks down into three layers. First, the inputs are hard to isolate. When an AI tool helps draft an article that then gets edited, optimized, and published by humans, attributing productivity gains to the AI alone requires controlled experiments most marketing teams cannot run.

Second, the outputs are delayed. Content ROI materializes over months as articles rank, earn backlinks, and drive conversions. A draft completed 40% faster today may generate zero value if it fails to rank, or significant value if it captures a high-intent keyword three months later.

Third, adoption does not guarantee value. Gartner found that 77% of marketers explore generative AI, but only 44% realize significant benefits (Gartner, March 2025). The gap between exploration and realized value suggests that workflow design may matter more than tool selection.

What Are the Hidden Costs of Single-Prompt AI Content?

A 2025 JMIR Cancer study found that conventional chatbots without retrieval-augmented generation (RAG) hallucinated approximately 40% of the time. RAG with curated sources reduced that to 0% for GPT-4 and 6% for GPT-3.5 (JMIR Cancer, 2025). On a different task, document summarization, Vectara's Hallucination Leaderboard tested 98 models and found that the best-performing model achieved a 0.7% hallucination rate (Vectara, 2025).

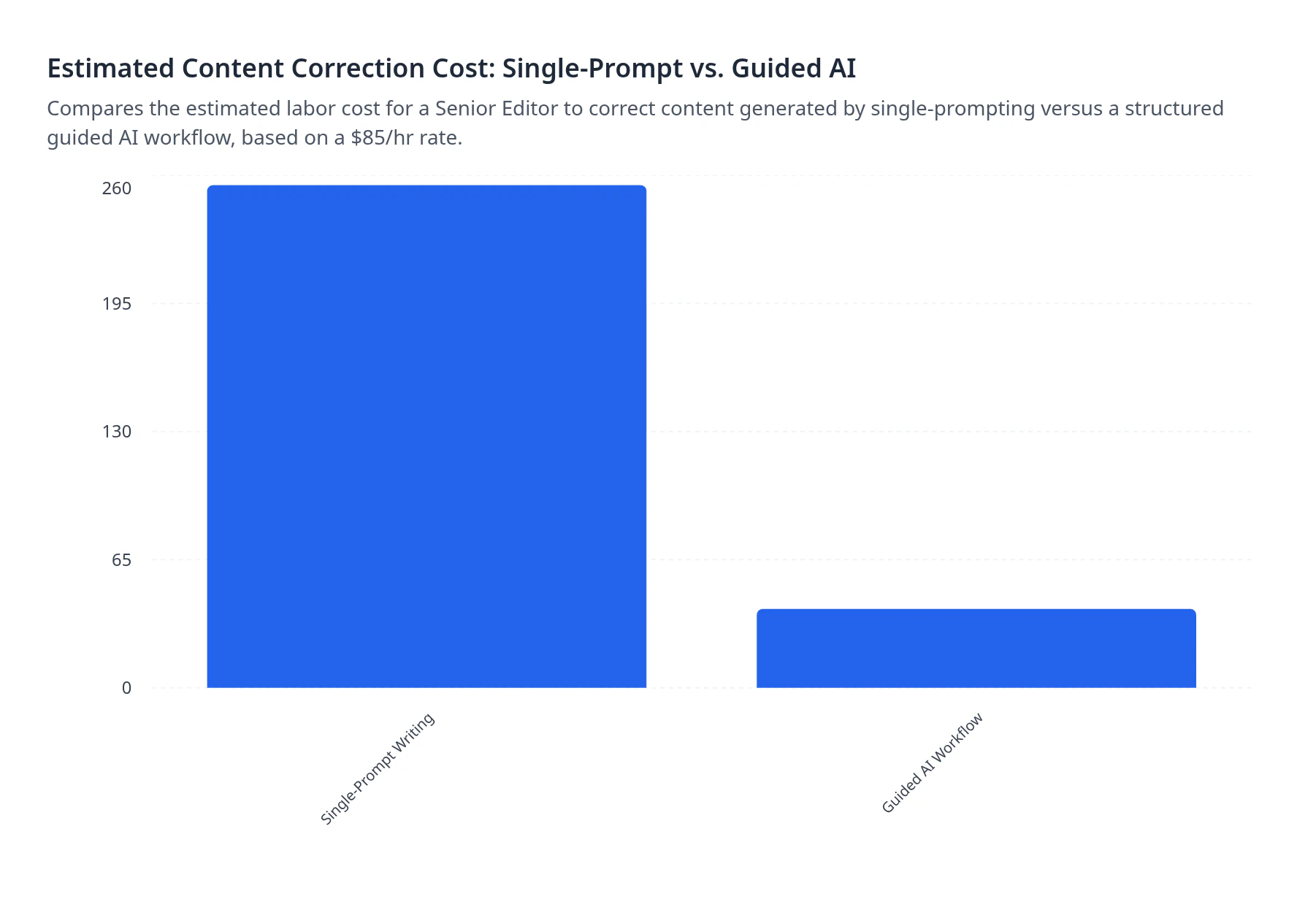

Every hallucination in a draft costs editing time to catch and fix. When that editing time compounds across dozens of articles per month, the hidden cost of single-prompt generation can become one of the largest line items in content production, and one that most teams never measure.

The correction cost is not theoretical. If a senior editor earning $85/hour spends 2 hours verifying and fixing a hallucination-prone draft, that single article carries $170 in hidden labor costs before it generates any value.
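To see how that per-article figure compounds, the sketch below scales it to a monthly volume. It is a simplified, hypothetical model: it assumes a hallucination rate can stand in for the share of drafts that need one correction pass. The JMIR Cancer rates were measured on individual chatbot answers, not full articles, so treat `error_rate` and the 40-article volume as planning assumptions rather than benchmarks.

```python
def monthly_correction_cost(articles_per_month: int,
                            error_rate: float,
                            hours_per_fix: float,
                            hourly_rate: float) -> float:
    """Expected monthly editor cost of fixing hallucination-prone drafts.

    Hypothetical model: each draft independently needs one correction
    pass with probability `error_rate`.
    """
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    drafts_needing_fixes = articles_per_month * error_rate
    return drafts_needing_fixes * hours_per_fix * hourly_rate


# Per-article figure from the text: 2 hours at $85/hour.
print(monthly_correction_cost(1, 1.0, 2, 85))  # 170.0

# Hypothetical team publishing 40 articles/month at ~40% vs ~6% error rates.
for rate in (0.40, 0.06):
    print(f"{rate:.0%}: ${monthly_correction_cost(40, rate, 2, 85):,.0f}/month")
```

Keeping the error rate as an explicit input lets a team plug in its own observed correction rate once it starts logging which drafts needed fact fixes.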

How Does a Multi-Step Workflow Reduce Editing Costs?

HubSpot's 2026 State of Marketing report, based on a survey of more than 1,500 marketers worldwide, found that 94% plan to use AI in content creation (HubSpot, 2026). Yet Gartner's finding that only 44% realize significant benefits from generative AI suggests a process problem rather than a tool problem.

Multi-step workflows address this gap by separating the content pipeline into discrete stages: research, outlining, drafting, and optimization. Each stage receives focused context rather than forcing a single prompt to handle everything at once.

The practical difference matters for ROI calculation. In a structured workflow, fact-checking targets data points that were verified at the research stage rather than claims the model invented. The workflow injects live SERP data at the research stage, brand guidelines at the drafting stage, and internal links at the optimization stage. Each injection point reduces downstream editing.
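A staged workflow can be sketched as a chain of functions, each receiving only the context it needs. In this minimal sketch every stage is a hypothetical placeholder that records what it received instead of calling a real model, SERP API, or CMS. The parameter names `serp_data`, `brand_guidelines`, and `internal_links` are assumptions that mirror the injection points in the text; in practice each stage would wrap a model call and could end in a human review checkpoint.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    """Work-in-progress article plus a log of what each stage injected."""
    topic: str
    body: str = ""
    notes: list[str] = field(default_factory=list)


# Hypothetical stage functions: each records the context it received
# instead of calling a real model, SERP API, or CMS.
def research_stage(draft: Draft, serp_data: list[str]) -> Draft:
    draft.body = "Sources: " + "; ".join(serp_data)
    draft.notes.append(f"research: {len(serp_data)} SERP results")
    return draft


def outline_stage(draft: Draft) -> Draft:
    draft.notes.append("outline: built from research output only")
    return draft


def drafting_stage(draft: Draft, brand_guidelines: str) -> Draft:
    draft.notes.append(f"draft: applied guidelines '{brand_guidelines}'")
    return draft


def optimization_stage(draft: Draft, internal_links: list[str]) -> Draft:
    draft.notes.append(f"optimize: added {len(internal_links)} internal links")
    return draft


def run_pipeline(topic: str, serp_data: list[str], brand_guidelines: str,
                 internal_links: list[str]) -> Draft:
    """Run the stages in order, giving each only its own context."""
    draft = Draft(topic)
    draft = research_stage(draft, serp_data)
    draft = outline_stage(draft)
    draft = drafting_stage(draft, brand_guidelines)
    draft = optimization_stage(draft, internal_links)
    return draft


result = run_pipeline("AI content ROI",
                      ["serp-result-1", "serp-result-2"],
                      "plain language, answer-first",
                      ["/hypothetical-internal-link"])
for note in result.notes:
    print(note)
```

Separating the stages also makes the labor measurement easier, because each stage's output is a natural place to log editor time.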

The 33-percentage-point gap between marketers who explore AI (77%) and those who realize significant benefits (44%) plausibly reflects workflow maturity. Teams using single prompts are exploring. Teams using structured workflows with human checkpoints are more likely to be realizing benefits.

What ROI Formula Works for AI Content Workflows?

McKinsey found that about 75% of generative AI's value concentrates in four business functions: customer operations, marketing and sales, software engineering, and R&D (McKinsey). For content teams, ROI measurement should focus on the intersection of labor efficiency and content performance.

A practical formula for content teams:

Content Workflow Net Return = (Labor Cost Reduction + Organic Performance Value) - Tool and Implementation Cost
Content Workflow ROI (%) = Net Return / Tool and Implementation Cost × 100
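The formula translates directly into a small function. This sketch returns both the net return (gains minus tool and implementation cost) and ROI as a percentage of that cost, the conventional ratio form. All dollar inputs in the example are hypothetical.

```python
def content_workflow_roi(labor_cost_reduction: float,
                         organic_performance_value: float,
                         tool_and_implementation_cost: float) -> tuple[float, float]:
    """Return (net return in dollars, ROI as a percentage of cost)."""
    if tool_and_implementation_cost <= 0:
        raise ValueError("cost must be positive to express ROI as a percentage")
    net_return = (labor_cost_reduction + organic_performance_value
                  - tool_and_implementation_cost)
    roi_pct = net_return / tool_and_implementation_cost * 100
    return net_return, roi_pct


# Hypothetical quarter: $6,000 labor saved, $9,000 organic value, $5,000 cost.
net, roi = content_workflow_roi(6_000, 9_000, 5_000)
print(f"net return ${net:,.0f}, ROI {roi:.0f}%")  # net return $10,000, ROI 200%
```

Reporting both numbers helps: the dollar figure shows scale, while the percentage makes quarters with different tool budgets comparable.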

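The labor component is measurable. Track hours per article across three phases: research, drafting, and editing. Compare these hours before and after implementing a structured AI workflow. The NBER data suggests a realistic range: a 14% average productivity gain, rising to 34% for team members new to the work, with minimal effect for the most experienced. Note that a productivity gain is not the same as a time reduction: 14% more output per hour means roughly 12% less time per article (1 / 1.14 ≈ 0.88), and a 34% gain means roughly 25% less.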
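Tracking hours per phase and comparing them with an NBER-style benchmark takes only a few lines. The phase hours below are hypothetical, and the benchmark conversion uses time per unit = 1 / (1 + gain), which assumes output quality stays constant.

```python
# Hypothetical hours per article, before and after a structured AI workflow.
BEFORE = {"research": 3.0, "drafting": 4.0, "editing": 2.0}
AFTER = {"research": 2.5, "drafting": 3.5, "editing": 2.0}


def pct_time_reduction(before: dict[str, float], after: dict[str, float]) -> float:
    """Percentage drop in total hours per article across all phases."""
    total_before = sum(before.values())
    total_after = sum(after[phase] for phase in before)
    return (total_before - total_after) / total_before * 100


def time_reduction_from_gain(productivity_gain: float) -> float:
    """Convert an output-per-hour gain (0.14 = 14%) into % less time per unit."""
    return (1 - 1 / (1 + productivity_gain)) * 100


measured = pct_time_reduction(BEFORE, AFTER)
print(f"measured: {measured:.1f}% fewer hours per article")
for label, gain in (("average", 0.14), ("novice", 0.34)):
    print(f"NBER {label} benchmark: {time_reduction_from_gain(gain):.1f}% less time")
```

Keeping the phases separate shows where the savings land; if drafting time falls but editing time rises, the net gain can be smaller than the drafting numbers suggest.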

The organic performance component requires patience. Content ROI materializes over 3-12 months as articles index, rank, and compound. A useful proxy: estimate the equivalent paid search cost of the organic traffic each article generates. If an article captures 500 monthly visits for a keyword with a $4.50 CPC, that article generates $2,250/month in equivalent paid value.
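The paid-equivalent proxy is monthly visits times cost per click, summed across the keywords an article ranks for. A minimal sketch, assuming each organic visit would otherwise have cost one paid click at that CPC; the second keyword and its numbers are hypothetical. `Decimal` keeps currency arithmetic exact.

```python
from decimal import Decimal


def paid_equivalent_value(keywords: list[tuple[int, Decimal]]) -> Decimal:
    """Sum of monthly organic visits x CPC across an article's keywords."""
    return sum((visits * cpc for visits, cpc in keywords), Decimal("0"))


# Example from the text: 500 visits/month at a $4.50 CPC.
print(paid_equivalent_value([(500, Decimal("4.50"))]))  # 2250.00

# Hypothetical article ranking for two keywords.
print(paid_equivalent_value([(500, Decimal("4.50")), (120, Decimal("2.10"))]))
```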

Columbia SIPA's warning applies here: avoid claiming precise ROI figures when the data foundation is still forming. Instead, track directional metrics. Are editing hours declining per article? Is organic traffic per published piece increasing? These trend lines matter more than a single ROI percentage.
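One way to track direction rather than a single ROI percentage is to fit a least-squares slope to each monthly metric. The monthly figures below are hypothetical; a negative slope for editing hours and a positive slope for organic traffic per piece would both point the right way.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values against month index 0, 1, 2, ..."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two months of data")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    var = sum((x - mean_x) ** 2 for x in range(n))
    return cov / var


editing_hours = [2.4, 2.3, 2.1, 2.2, 1.9, 1.8]   # hypothetical, per article
organic_visits = [310, 340, 330, 390, 420, 460]  # hypothetical, per piece

print(f"editing hours: {trend_slope(editing_hours):+.2f} h/month")
print(f"organic visits: {trend_slope(organic_visits):+.1f} visits/month")
```

A slope smooths out single noisy months, which matters when a handful of unusually long articles can swing one month's average.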

How Do AI Content Signals Affect Search Visibility?

AI-referred sessions grew 527% between January and May 2025, jumping from 17,076 to 107,100 across 19 GA4 properties analyzed by Previsible (Previsible, 2025). At the same time, organic click-through rates declined 61% in queries with AI Overviews, from 1.76% to 0.64% (Seer Interactive, 2025).
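Both headline figures are relative changes, (new - old) / old. Recomputing them from the reported endpoints is a quick sanity check. The Previsible session counts reproduce the 527% figure, while the Seer endpoints work out to roughly a 64% decline rather than 61%, so confirm the exact figures against the source before reusing them.

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, in percent."""
    if old == 0:
        raise ValueError("old value must be non-zero")
    return (new - old) / old * 100


print(f"AI-referred sessions: {pct_change(17_076, 107_100):+.0f}%")  # +527%
print(f"Organic CTR: {pct_change(1.76, 0.64):+.1f}%")               # -63.6%
```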

Content structure directly influences whether AI systems cite your content. Answer-first formatting improves AI citation rates. Content containing original statistics was cited 40% more frequently (Onely). Fresh content (under 3 months old) was 3x more likely to be cited by AI (Digitaloft). Meeting these standards is what separates ROI-positive content from the AI slop that Google actively demotes.

These signals have direct ROI implications. Content that earns AI citations generates a new traffic channel independent of traditional organic rankings. The Previsible data shows this channel is growing fast enough that ignoring it creates measurable opportunity cost.

Content without maintenance loses 50% of its citation performance within 12-18 months (Semrush, 2025). This means ROI calculations must factor in ongoing content refresh costs, not just initial production costs.
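Folding refresh costs into the cost side can be as simple as adding one refresh per maintenance interval over the planning horizon. A sketch under stated assumptions: the 12-month default interval (the early end of the 12-18 month decay window), the 24-month horizon, and the dollar figures are hypothetical, and each refresh is assumed to cost a fixed amount.

```python
def lifetime_content_cost(production_cost: float,
                          refresh_cost: float,
                          horizon_months: int,
                          refresh_interval_months: int = 12) -> float:
    """Production cost plus one refresh per full interval within the horizon.

    Hypothetical model: a refresh is due every `refresh_interval_months`,
    starting one interval after publication.
    """
    if refresh_interval_months <= 0:
        raise ValueError("refresh interval must be positive")
    refreshes = horizon_months // refresh_interval_months
    return production_cost + refreshes * refresh_cost


# Hypothetical article: $600 to produce, $150 per refresh, 2-year horizon.
print(lifetime_content_cost(600, 150, 24))  # 900
```

Comparing this lifetime figure, rather than the $600 production cost alone, against the article's paid-equivalent value gives a more honest per-article return.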

When Does Human Oversight Drive the Highest ROI?

Google's John Mueller stated in November 2025: "Our systems don't care if content is created by AI or humans. What matters is helpful" (Google). He also called low-quality AI SEO content "digital mulch" in August 2025 (Search Engine Journal) and warned that "just rewriting AI content by a human won't change that, it won't make it authentic."

Google's January 2026 Authenticity Update prioritized Experience signals, the first E in E-E-A-T. Building these signals into every article is the operational challenge. The highest ROI from AI content workflows comes not from generating more content faster, but from freeing human experts to inject genuine experience into AI-assisted drafts.

The JMIR Cancer study reinforces this. RAG achieved 0% hallucination for GPT-4 using curated source material that human experts had selected and verified. The AI did not curate its own sources. Humans set the quality floor that the AI then maintained.

Human oversight costs time. But the NBER data suggests AI assistance raised output per hour by 14% on average, which works out to roughly 12% less time for the same output, a pattern consistent with peer-reviewed time studies comparing manual and AI-assisted research. That margin is where the ROI lives: not in eliminating humans from the workflow, but in reallocating their time from production to quality assurance, experience injection, and strategic direction.

Frequently Asked Questions

How much does AI actually improve content productivity?

An NBER study of 5,179 customer support agents found AI tools increased productivity by 14% on average, with a 34% improvement for novice workers (NBER Working Paper 31161). A separate NBER study found that reported time savings were equivalent to 1.4% of total work hours. McKinsey estimated marketing productivity gains worth 5-15% of total marketing spend.

Can you reliably calculate ROI for AI content workflows?

Columbia SIPA research concluded that a reliable ROI framework for generative AI is "not possible at the moment" due to data scarcity and technology immaturity. Gartner found only 44% of marketers exploring AI realize significant benefits. Track directional metrics like editing hours per article and organic traffic per piece instead.

How much do AI hallucinations cost in editing time?

In a 2025 JMIR Cancer study, conventional chatbots hallucinated approximately 40% of the time, and RAG with curated sources reduced this to 0% for GPT-4. Every hallucination requires human verification and correction. At an $85/hour editor rate, a 2-hour correction cycle costs $170 per article in hidden labor.

Does Google penalize AI-generated content?

Google's John Mueller confirmed in November 2025: "Our systems don't care if content is created by AI or humans. What matters is helpful" (Google). However, he called low-quality AI content "digital mulch" and warned that rewriting AI output alone "won't make it authentic."

What content signals boost AI citations?

Answer-first formatting improves AI citation rates. Content with original statistics is cited 40% more (Onely). Fresh content under 3 months old is 3x more likely to be cited (Digitaloft). Without maintenance, content loses 50% of citation performance in 12-18 months (Semrush).

From Productivity Gains to Content Authority

The measurable ROI of AI content workflows lives in a narrow band: 14% average productivity gains, productivity value worth 5-15% of marketing spend, and near-zero hallucination rates when retrieval from curated sources replaces unaided prompts. Content without ongoing maintenance loses 50% of its citation performance within 12-18 months (Semrush, 2025). The teams that measure, iterate, and invest in human oversight are the ones converting AI tools into durable search authority.