Prompt-Based vs Crawl-Based AI for SEO: What the Data Shows


TL;DR: RAG reduces AI hallucination rates from approximately 40% to 0-6% (JMIR Cancer, 2025). Crawl-based AI with retrieval grounding outperforms prompt-only approaches for SEO content at scale.

At a Glance

Retrieval-augmented generation (RAG) reduced AI hallucination rates from approximately 40% to 0-6% when paired with curated source data (JMIR Cancer, 2025). Meanwhile, overall crawler traffic rose 18% from May 2024 to May 2025, with GPTBot growing 305% (Cloudflare, July 2025). The distinction between prompt-based and crawl-based AI is no longer theoretical. It determines whether your content ranks or gets buried.

About the Author

Daniel Agrici is Co-Founder at Rankenstein, where he oversees product development and AI-assisted content strategy. With over eight years in technical SEO and content automation, Daniel has led content operations for B2B SaaS companies across fintech, healthtech, and enterprise software verticals. He writes about the intersection of AI tools and editorial quality.

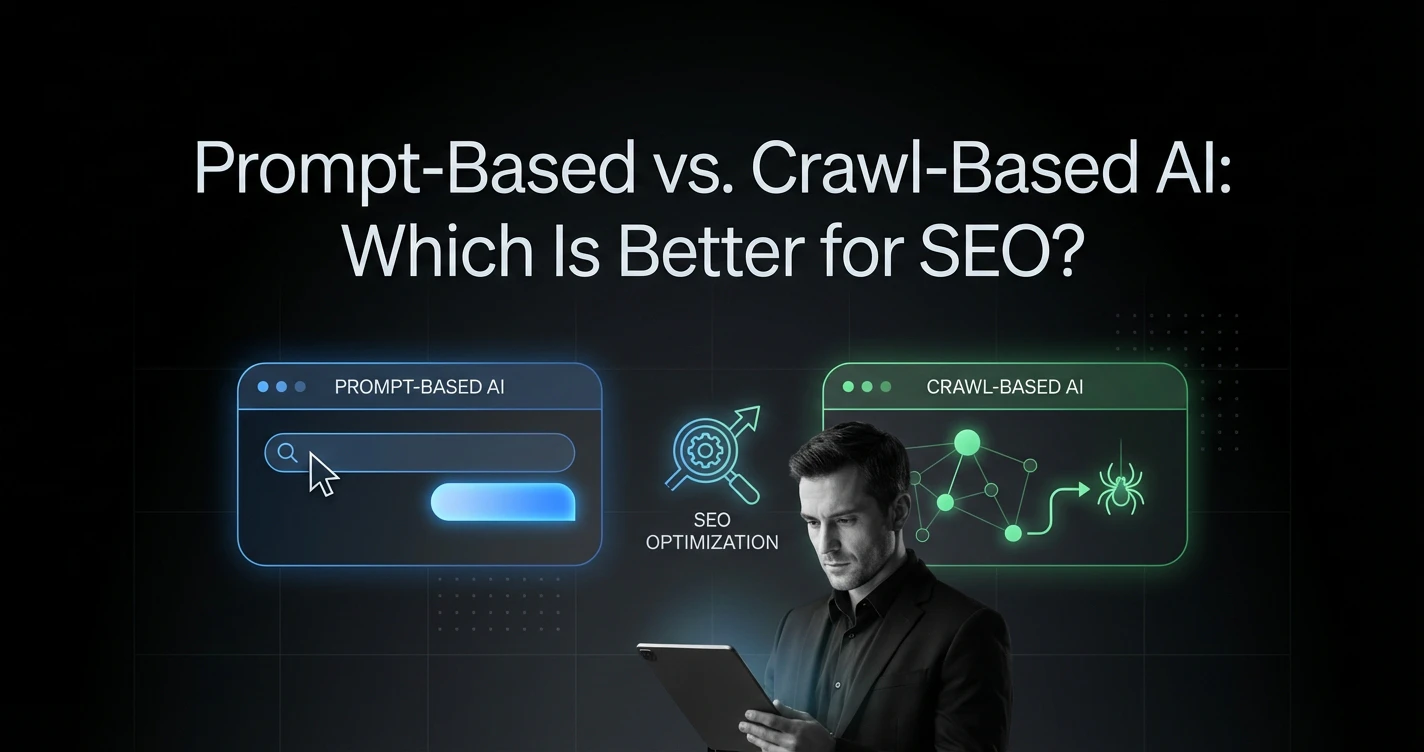

What Is the Difference Between Prompt-Based and Crawl-Based AI?

A 2025 study in JMIR Cancer found that conventional (prompt-only) chatbots hallucinated approximately 40% of the time, while RAG-based systems using curated medical data reduced that rate to 0% for GPT-4 and 6% for GPT-3.5 (JMIR Cancer). The odds ratio for hallucinations in conventional vs RAG systems was 16.1 (P<.001).

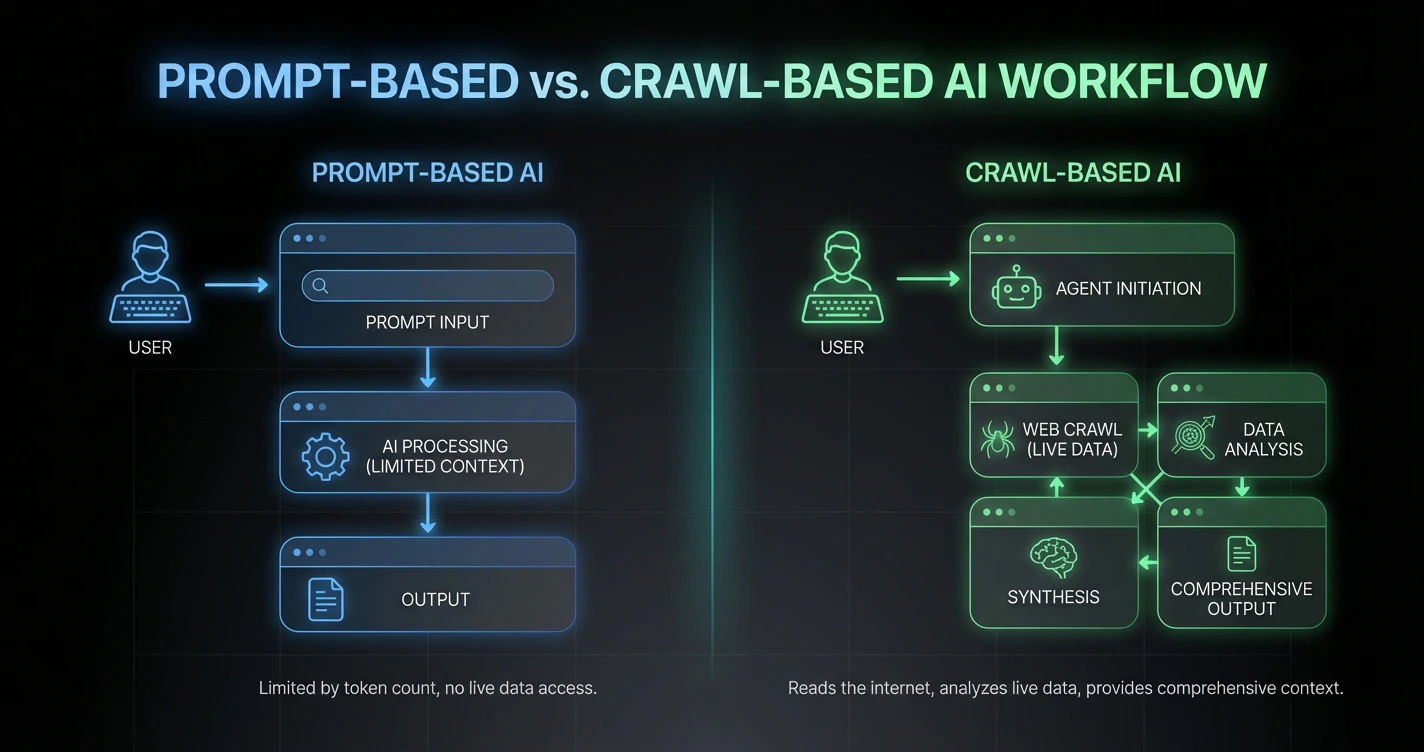

Prompt-based AI generates text by predicting the next most probable token from static training data. It does not know whether a competitor published new research yesterday or whether Google changed a keyword's intent this morning. It operates from a frozen snapshot of the web.

Crawl-based AI (retrieval-augmented generation) sends autonomous agents to retrieve live information before generating content. These agents navigate to current URLs, extract text and structure, and feed that real-time context into the language model. The AI reads the internet before writing a single word.

As noted by Human Security, the ecosystem is rapidly distinguishing between simple scrapers and intelligent agents capable of complex execution. This distinction matters for SEO because it determines whether your AI tool can analyze the current SERP or merely guess at it.

| Feature | Prompt-Based AI | Crawl-Based AI (RAG) | SEO Impact |
|---|---|---|---|
| Data Source | Static training data (cutoff dates) | Live web retrieval + SERP data | Factual currency for rankings |
| Hallucination Rate | ~40% (JMIR Cancer) | 0-6% with curated sources | Protects E-E-A-T trust signals |
| Context | Limited by token window | External retrieval at scale | Full competitor analysis |
| Internal Linking | Manual or hallucinated URLs | Based on live site architecture | Automates link equity flow |
| Freshness | Training cutoff (months old) | Real-time data | Critical ranking signal in 2026 |

How Much Has AI Crawler Traffic Grown?

Cloudflare's analysis of web traffic from May 2024 to May 2025 found that overall crawler traffic rose 18%, with GPTBot growing 305% and Googlebot increasing 96% (Cloudflare, July 2025). ChatGPT-User requests surged 2,825%, and PerplexityBot recorded a 157,490% increase in raw requests.

Googlebot's share of total crawler traffic rose from 30% to 50% in the same period. GPTBot climbed from the ninth most active crawler to the third. Meanwhile, ClaudeBot fell from 11.7% to 5.4% of traffic, and Bytespider (ByteDance) plummeted 85% in request volume.

These numbers confirm a structural shift. AI systems are not just generating content; they are actively reading the web at unprecedented scale. Content that is structured for machine readability gets retrieved and cited. Content that is not gets skipped.

Cloudflare also found that 14% of top domains now use robots.txt rules to manage AI crawlers. For a deeper look at the breakdown between training crawlers, retrieval agents, and search engine bots, their analysis of AI crawler traffic by purpose and industry provides additional context.
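To make the robots.txt point concrete, here is a minimal sketch of how a site owner might check which crawlers a given rule set admits. It uses Python's standard-library `urllib.robotparser`. The rules, the `crawler_access` helper, and the example.com URL are illustrative assumptions, not a recommended policy.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block one AI crawler site-wide, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt: str, agents: list[str], url: str) -> dict[str, bool]:
    """Report whether each user agent may fetch `url` under the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(
    ROBOTS_TXT,
    ["GPTBot", "Googlebot", "PerplexityBot"],
    "https://example.com/blog/post",  # placeholder URL
)
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Keep in mind that robots.txt is advisory. It states a preference that well-behaved crawlers honor, and it does not enforce anything on its own.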

Why Do Prompts Fail for Large-Scale Content?

Chroma's "Context Rot" study (July 2025) tested 18 leading language models and found that performance degraded by 13.9% to 85% as input length increased, even on simple retrieval tasks (Chroma Research). Models achieved near-perfect accuracy at 25-100 words but exhibited significant failures at 10,000+ words.

This matters for SEO because comprehensive content requires extensive context. A prompt-based system analyzing a 5,000-page e-commerce site would need to process far more tokens than any model can reliably handle in a single pass. Even with large context windows, the model does not retain a persistent map of your site architecture between sessions.

The internal linking problem makes this concrete. When a prompt-based AI is asked to "add internal links," it typically invents URLs that do not exist (creating 404 errors) or links to generic top-level pages rather than deep, relevant content. A crawl-based system indexes your sitemap first, queries your actual page inventory, and inserts verified URLs with contextual anchor text.
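The "index the sitemap first, then insert only verified URLs" step can be sketched in a few lines. This is a simplified illustration under stated assumptions: the sitemap content is hard-coded rather than fetched, the helper names `sitemap_urls` and `split_links` are hypothetical, and matching is by exact URL string (a production system would also normalize trailing slashes and redirects).

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap; a real pipeline would download the site's sitemap file.
SITEMAP_XML = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/guides/internal-linking</loc></url>
  <url><loc>https://example.com/guides/rag-for-seo</loc></url>
</urlset>
""".strip()


def sitemap_urls(xml_text: str) -> set[str]:
    """Collect every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }


def split_links(candidates: list[str], inventory: set[str]) -> tuple[list[str], list[str]]:
    """Separate model-suggested URLs into verified and rejected lists."""
    verified = [url for url in candidates if url in inventory]
    rejected = [url for url in candidates if url not in inventory]
    return verified, rejected


inventory = sitemap_urls(SITEMAP_XML)
suggested = [
    "https://example.com/guides/rag-for-seo",
    "https://example.com/guides/made-up-page",  # would be a 404 if published
]
verified, rejected = split_links(suggested, inventory)
print("insert:", verified)
print("drop:", rejected)
```

The design choice is to treat the sitemap as the only source of truth for link targets, so a URL the model invents never reaches the published page.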

Screaming Frog highlights how AI-powered crawling can extract structural data from competitor pages (heading hierarchies, FAQ schema, table formats) that prompt-based tools simply cannot see. You cannot optimize for the current SERP if your AI tool cannot read the SERP.

How Does Retrieval-Augmented Generation Reduce Hallucinations?

The JMIR Cancer study evaluated four chatbot configurations: GPT-4 with curated data (0% hallucination), GPT-3.5 with curated data (6%), GPT-4 with web search (6%), and GPT-3.5 with web search (10%). The conventional chatbot without RAG hallucinated approximately 40% of the time (JMIR Cancer, 2025).

RAG works by grounding the language model in retrieved evidence before generating output. Instead of predicting what sounds plausible, the system checks what is actually true according to its source material. The quality of the source data directly determines the quality of the output.

The trade-off is response coverage. RAG-based chatbots in the JMIR study answered only 36-81% of questions, declining the rest with "I don't know" rather than fabricating a response. Conventional chatbots answered 100% of questions, but approximately 40% of those answers contained hallucinations. For SEO, accuracy beats coverage. One hallucinated statistic in a published article can undermine an entire domain's credibility. Comparing manual research against AI-assisted methods reveals exactly where the accuracy boundary lies.

What Content Formats Get Cited by AI Search Engines?

The Omnius GEO Industry Report (2025) analyzed 8,000 AI citations and found that 82.5% linked to deeply nested content pages rather than homepages (Omnius). Product-related content dominated citations at 46-70% of all sources. AI search traffic converted at 4.4x the rate of traditional organic search.

Google AI Overviews now appear in 16% of all US searches, more than double the rate from March 2025 (Omnius). When AI Overviews do appear, they reduce website clicks by 34.5%. Seer Interactive measured organic CTR declining 61% with AI Overviews across 3,119 queries (from 1.76% to 0.64%) (Seer Interactive, 2025).

For content to be cited by AI systems, it must be structured for machine extraction. This means atomic headings where every H2 is followed immediately by a direct answer, structured lists for features and steps, data tables for comparative information, and authoritative sourcing with linked citations. AI agents prefer structured formats over dense paragraphs.
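One common way to expose question-and-answer content in a machine-readable form is schema.org `FAQPage` markup serialized as JSON-LD. The sketch below generates that markup from question and answer pairs. The `faq_jsonld` helper is a hypothetical name, and nothing in the studies cited here shows that this markup by itself increases AI citations, so treat it as one structuring option rather than a guarantee.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld(
    [
        (
            "How fast is AI crawler traffic growing?",
            "Cloudflare reported overall crawler traffic rose 18% from May 2024 to May 2025.",
        )
    ]
)
print(markup)
```

The output would typically be embedded in the page inside a `script` element of type `application/ld+json`, with the answer text kept identical to the visible answer on the page.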

Freshness matters as well. Content less than three months old is 3x more likely to be cited by AI systems (Digitaloft). Generative search results prioritize the most recent valid data. A prompt-based article referencing a 2023 software version will lose to a crawl-based article that references yesterday's release.

Does Internal Linking Automation Actually Work?

Zyppy's study of 23 million internal links across 1,800 websites found that URLs with 40-44 internal links saw 4x more Google Search clicks than URLs with 0-4 links (Zyppy, 2024). However, after approximately 45-50 internal links, traffic began to decline. Anchor text variety, not raw link quantity, was the strongest traffic correlator.
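A site audit along these lines could be sketched as follows: count inbound internal links and distinct anchor texts per target URL, then flag pages that fall outside a chosen range. The thresholds are loosely based on the Zyppy figures quoted above and are assumptions to tune, not rules. The data is correlational, and the function names and sample link data are hypothetical.

```python
from collections import defaultdict


def inbound_link_stats(links: list[tuple[str, str, str]]) -> dict[str, dict[str, int]]:
    """Aggregate (source_url, target_url, anchor_text) rows per target URL."""
    counts: dict[str, int] = defaultdict(int)
    anchors: dict[str, set[str]] = defaultdict(set)
    for _source, target, anchor in links:
        counts[target] += 1
        anchors[target].add(anchor.strip().lower())  # case-insensitive variety
    return {
        target: {"inbound": counts[target], "distinct_anchors": len(anchors[target])}
        for target in counts
    }


def classify(inbound: int, low: int = 5, high: int = 45) -> str:
    """Label a page by inbound link count; `low` and `high` are assumed cutoffs."""
    if inbound < low:
        return "under-linked"
    if inbound > high:
        return "review"
    return "ok"


# Hypothetical crawl output.
links = [
    ("/blog/a", "/guides/rag", "RAG guide"),
    ("/blog/b", "/guides/rag", "rag guide"),
    ("/blog/c", "/guides/rag", "retrieval basics"),
]
stats = inbound_link_stats(links)
for target, row in stats.items():
    print(target, row, classify(row["inbound"]))
```

Tracking distinct anchors alongside raw counts reflects the study's finding that anchor text variety correlated with traffic more strongly than link quantity.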

Search Pilot's controlled testing found a 25% uplift in organic traffic for level-two and level-three category pages after strategic internal linking improvements, translating to an additional 9,200 organic sessions per month (Search Pilot).

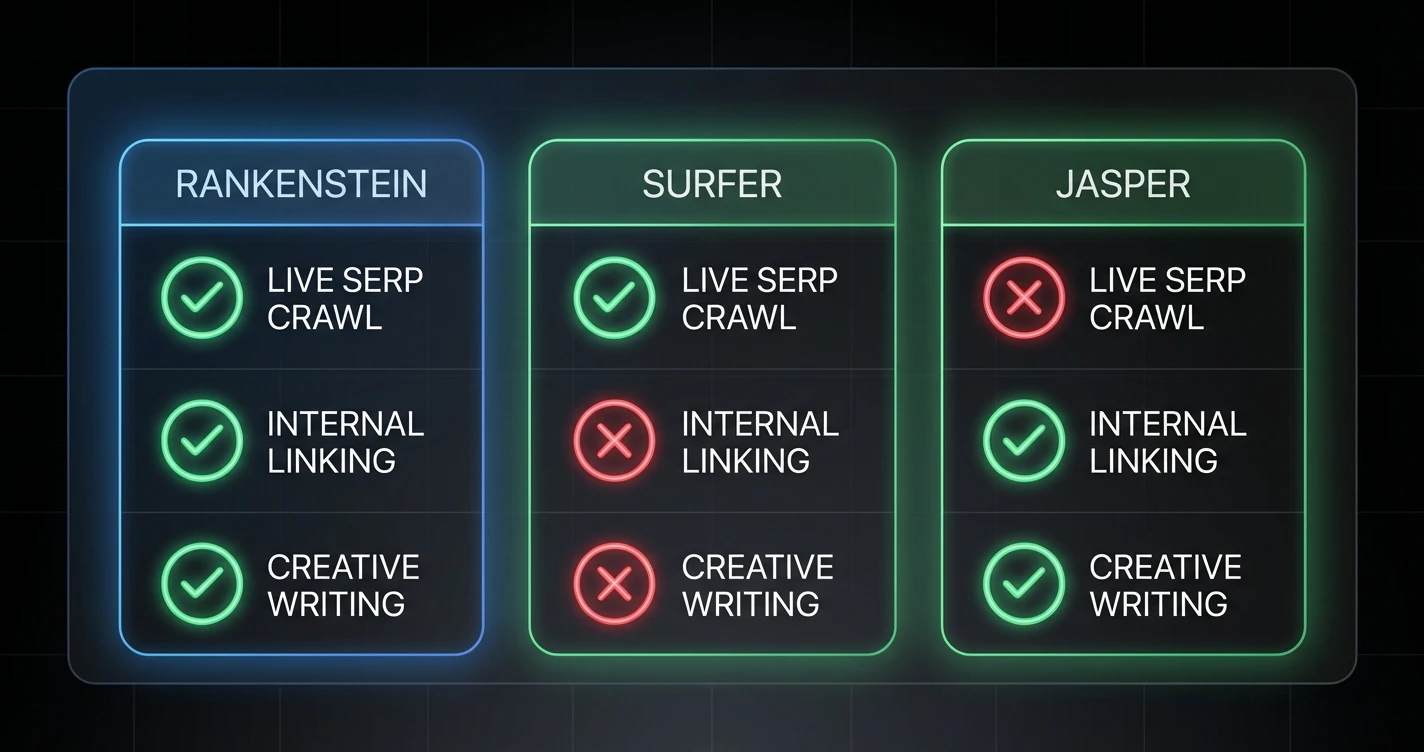

This is where crawl-based AI provides its most practical advantage. A prompt-based system asked to "add internal links" operates from memory. It guesses at URLs, often generating links to pages that do not exist. A crawl-based system indexes the live sitemap, queries the actual page inventory for topical matches, and inserts verified URLs with contextual anchor text.

The internal linking disconnect is one of the most tangible failures of prompt-based content generation. Every hallucinated URL is a 404 error. Every generic link to a homepage is a missed opportunity to distribute link equity to deep content. When links are inaccurate, they can also trigger keyword cannibalization by misdirecting authority to competing pages. The Zyppy data confirms that getting this right, with varied anchor text and accurate URLs, directly correlates with organic traffic.

When Does Human Oversight Still Matter?

Google's John Mueller stated on Reddit in November 2025: "Just rewriting AI content by a human won't change that, it won't make it authentic" (Search Engine Roundtable). He also clarified: "Our systems don't care if content is created by AI or humans. What matters is helpful" (Google).

Google's January 2026 Authenticity Update prioritized Experience signals, the first E in E-E-A-T. This means language patterns indicating firsthand knowledge, specific measurements, original media, and personal anecdotes. Building E-E-A-T signals into every article is how teams operationalize these requirements. AI can generate competent prose, but it cannot generate authentic experience.

Mueller called low-quality SEO content "digital mulch" in August 2025 (Search Engine Journal). His guidance: "I'd approach it as essentially starting over with no content, and consider what it is that you want to do on the site." Mechanical rewriting of AI content does not recover rankings. Sites must fundamentally reconsider their value proposition.

The practical framework has three layers. Use crawl-based AI for research, data gathering, and structural analysis. Use language models for synthesis and drafting. Use humans for verification, experience injection, and voice calibration. Content that passes through all three layers earns both rankings and trust, and retrieval-grounded AI is the engine of the broader AI content workflow that ties those layers together.

Frequently Asked Questions

What is the difference between prompt-based and crawl-based AI for SEO?

Prompt-based AI generates content from static training data and cannot see the current web. Crawl-based AI (retrieval-augmented generation) sends agents to retrieve live SERP data, competitor content, and site architecture before generating content. A 2025 JMIR Cancer study found RAG reduced hallucination rates from approximately 40% to 0-6%.

Does retrieval-augmented generation actually reduce AI hallucinations?

Yes. JMIR Cancer (2025) tested four RAG configurations and found that GPT-4 with curated source data achieved a 0% hallucination rate, compared to approximately 40% for conventional chatbots without RAG. The odds ratio for hallucinations in conventional vs RAG systems was 16.1 (P<.001).

How fast is AI crawler traffic growing?

Cloudflare reported overall crawler traffic rose 18% from May 2024 to May 2025. GPTBot grew 305%, ChatGPT-User surged 2,825%, and PerplexityBot increased 157,490% in raw requests. Googlebot's share of total crawler traffic rose from 30% to 50%.

Can AI-generated content rank in Google search?

Google's John Mueller confirmed in November 2025: "Our systems don't care if content is created by AI or humans. What matters is helpful" (Google). However, he called low-quality AI SEO content "digital mulch" and warned that rewriting AI content alone "won't make it authentic."

What content formats are most likely to be cited by AI search engines?

Answer-first formatting increases AI citation likelihood. Content with statistics has a 40% higher citation rate (Onely). Content less than three months old is 3x more likely to be cited (Digitaloft). The Omnius GEO Report found 82.5% of AI citations link to deeply nested content pages rather than homepages.

From Convenience to Accuracy

The choice between prompt-based and crawl-based AI is a choice between convenience and accuracy. Content without maintenance loses 50% of its citation performance within 12-18 months (Semrush, 2025). Building systems that combine live data retrieval, language model synthesis, and human verification is what separates content that ranks from content that fills space.